Last year I tried quite a few new things, and many of them were fun enough that I will keep experimenting with them. One of these was a different kind of online learning: not just watching videos and class material already shared on, e.g., MIT OpenCourseWare or Stanford's YouTube channel, but actually being part of a class, doing real homework, working to real deadlines, and taking real exams at the end. And hopefully getting real knowledge too (though that part depends more on me than on the class).

This was possible because Stanford announced three online classes for its Fall 2011 semester: Databases, Machine Learning, and Artificial Intelligence. All three looked very interesting, so I signed up for each of them. Since the Spring 2012 semester will offer even more classes, I'm taking some notes here on how this first truly large-scale experiment went.

Stanford classes, Fall 2011

Databases

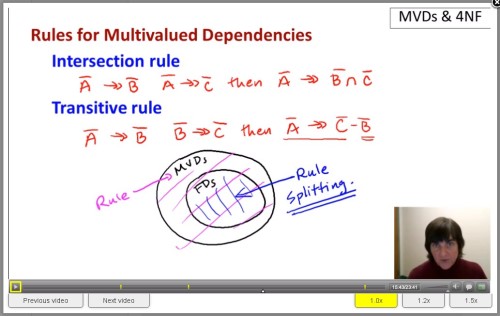

This was the class I hesitated over most, unsure whether it would be interesting enough to sign up for, but I'm really glad I did.

Professor Jennifer Widom turned out to be an excellent teacher, and the material was also very interesting and fun to work with. Her team also built pretty good infrastructure for the videos, exercises, and exams. It's really impressive what they built in such a short time, with tens of thousands of students relying on them (as far as I remember, about 90,000 signed up, though perhaps ~30,000 had done enough work by the end to get any score and a certificate of accomplishment).

Things were explained thoroughly, and the exercises were usually challenging enough to make me think, with all the excitement of solving puzzles. The exams covered a lot of material, and I didn't score as high as I expected, though I think almost all of my lost points came from not paying enough attention to the questions, misunderstanding them, or second-guessing myself.

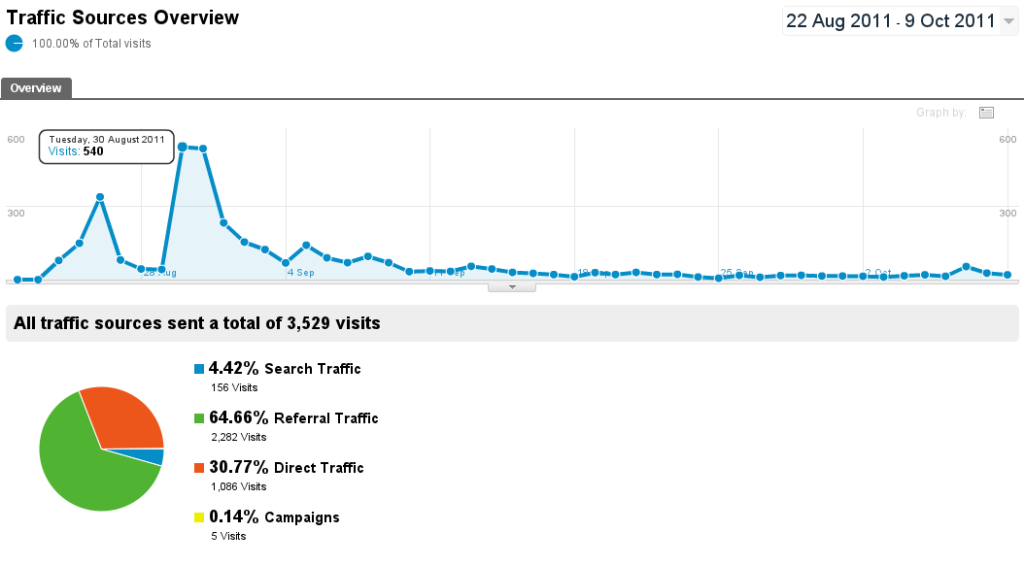

In the end I scored 308 out of 323 (which, judging by the published statistics, put me in the 60th-70th percentile). The best part, though, is that I could almost immediately use many of the ideas I learned there about SQL and the different ways of thinking about databases.

Machine Learning

I hesitated over this one too, because I thought there would be too much overlap with the AI class, but the two actually had quite different aims.

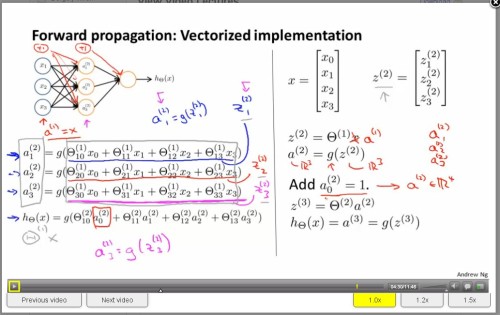

Taught by Professor Andrew Ng, this class really focused on practical implementation, so it could even be called "Machine Learning for the working professional". There were lots of ideas and good explanations of how to implement regression, classification, and neural networks, all applied to a number of different problems.

From what I've seen, some people complained that the programming exercises were too easy, and indeed they could usually be solved in a few lines, at least by those who already mostly knew how to solve them. In my experience, though, the friends who asked me to study with them had a much harder time. I'd think of those exercises more as blueprints and guidelines for anyone who really wants to implement some of these algorithms in a real setting.

This class used a setup very similar to Databases: 10-18 minute videos, 2-4 of them per lecture. A nice touch was that I could speed up playback, so I watched these and the other classes at 1.5x speed; somehow that was just the right pace.

In the end I had 79.25/80 on the review questions (I forgot to go back and correct the last one) and 800/800 on the programming exercises. The best result, though, is that I'm now thinking a lot about what data I have that I could hack on with the tools I've acquired.

Artificial Intelligence

This was the first class to be announced, and the most popular, probably because of both the topic and the lecturers.

While the other two courses were quite similar in setup and technology, this one differed from them in almost every respect. There were two lecturers, Sebastian Thrun and Peter Norvig. They used short videos hosted on YouTube, ranging from under a minute to about 4 minutes, with up to about 30 of them per lecture. Instead of slides and a tablet to "write" on them, they used actual pen and paper, with occasional printouts.

The low-tech presentation was fine most of the time, and it was easy enough to follow what was happening, but in the first couple of classes (before they got the hang of it) things were sometimes pretty hard to see.

The two lecturers' teaching skills were not really equal. Professor Thrun explained things really well; his classes were easy to follow and I enjoyed them a lot, and his enthusiasm is very contagious. This probably explains what he did after the classes finished, but let's come back to that later.

Professor Norvig, on the other hand, was pretty difficult to follow: he jumped between topics and explanations, and it often felt like he was (probably unintentionally) making things much harder than they really are. Sometimes the quiz questions asked about things that had been explained in a rather misleading way, or had not been explained yet at all. Plenty of people complained about this on the forums, and I was a bit upset too (how can they count those scores toward the final result if the questions are handled so badly?), but in the end it felt like I was often just making excuses for not thinking deeply enough about the problems. It's Stanford after all: don't look for shortcuts, just fight through.

It was interesting to see them cover most of the Machine Learning class's material in two lectures, and many of the other topics were a bit rushed as well, since there was just so much to say. On the other hand, I got enough of an introduction to loads of topics and ideas to have a sense of where to follow up if I wanted to.

With a score of 95.6% I was apparently only in the top 25%, which means this class had a really tough crowd. It left me with plenty to think about as well, and more hacking ideas.

Future

Of course, learning does not stop here. Now that someone has run this experiment, I think it is just an amazing beginning for exploring the real potential of online learning.

More Stanford classes

Apparently the success surprised a lot of people, both at Stanford and outside. Stanford has now announced about a dozen new classes for the Spring 2012 semester, and this time not just in computer science but in a lot of other interesting subjects too. They are slightly delayed: they were supposed to start this week (I have my note paper ready), but will now start gradually over February and March. I still haven't decided which ones to take, since the three I took last time used up a considerable amount of time, but there are just way too many cool ones:

- Technology Entrepreneurship, which I'm definitely taking; that's where my future leads, so let's see what I can learn at this stage

- Making Green Buildings, since architecture is awesome and I like high-tech designs; I'm curious what they can teach

- Cryptography, an all-time favorite topic

- Probabilistic Graphical Models, whose schedule covers too many good topics to pass up, with lots of interactive computing and connections between the physical and computational worlds

- Design and Analysis of Algorithms I, because algorithms are like puzzles, and there are never enough of them

- Game Theory, to understand the world a bit better and make better choices

- Natural Language Processing, covered a little in the AI and ML classes, just enough to get me all excited about its power

- Information Theory, which I got a little of as a physicist, just enough to see how powerful it can be and leave me hungry for more

- Model Thinking, which apparently can make me better at complex planning, something I used to call my favorite pastime

- Human-Computer Interaction, which can be very useful: these days technology enables so many new things in this area, with a lot of hacking potential

- Anatomy, since with a lot of doctor friends I'd love to know more about this most amazing machine of ours, the human body.

Of course I can only take a couple of them; I just hope the videos for the rest will stay available afterwards, so I can catch up on the interesting courses later.

Udacity

Another development, reported a few days ago, is Udacity, an online university: Professor Thrun apparently gave up his tenure at Stanford to start this initiative. He must be very convinced of the project's future to do that, and I think if anyone can follow through, he can. Their first courses are Building a Search Engine and Programming a Robotic Car, both areas where he has plenty of experience (he led the team that built Stanley, the self-driving car that won the 2005 DARPA Grand Challenge). I'm very curious to see what this will become.

Khan Academy

Of course there's one more big name in online learning: Khan Academy, which is probably aimed at a different audience, but also a much wider one. I had a lot of fun with these other projects, so I want to see more of what Khan Academy is capable of. Salman Khan is also a very passionate speaker, and his enthusiasm did rub off on me somewhat.

Afterword

Anyone who wanted to learn on their own could always do so. Now, however, it feels easier than ever, and one can learn much more advanced material than before. I can only hope that society becomes more educated this way, and thus cleverer and more resilient. Let's see what I can do about that too.

(Edit: A little more on the changing role and importance (or rather lack of importance) of universities, by Matt Welsh. This link is not meant as agreement or disagreement, just food for thought.)