Folding@Home popped up on my radar due to a recent announcement that their computational research platform is adding a bunch of projects to study (and ultimately help fight) the COVID-19 virus. Previously I haven’t had any good machine at hand to help in such efforts (my 9-year-old Lenovo X201 is still cozy to work with, but doesn’t pack a computing punch). At work, however, I get to be around GPU machines much more, and that gave me ideas on how to contribute a bit more.

Poking around the available GPU instance types on AWS, I’ve seen that there are some pretty affordable ones in the G4 series, going as low as roughly $0.60/hour for a decent & recent CPU and an NVIDIA Tesla T4 GPU. This drops even further with spot instances: looking around the different regions, I’ve seen available capacity at $0.16-0.20/hour, which really feels like a bargain. Thus I thought of spinning up a Folding@Home server in the cloud on spot instances, to help out and hopefully learn a thing or two, at the price of roughly 2 cups of gourmet London coffee (or taking the tube to work) per day.
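The price hunting above can also be done from the command line. This is a minimal sketch, assuming the AWS CLI is installed and configured; the function names and the region list in the usage comment are my own picks for illustration, not anything from the original setup.

```shell
# Print current spot prices (availability zone, hourly price) for an
# instance type in each of the given regions.
# AWS can be overridden (e.g. AWS=echo) to inspect the command without credentials.
spot_prices() {
  local instance_type="$1"; shift
  local now region
  now="$(date -u +%Y-%m-%dT%H:%M:%SZ)"
  for region in "$@"; do
    ${AWS:-aws} ec2 describe-spot-price-history \
      --region "$region" \
      --instance-types "$instance_type" \
      --product-descriptions "Linux/UNIX" \
      --start-time "$now" \
      --query 'SpotPriceHistory[].[AvailabilityZone,SpotPrice]' \
      --output text
  done
}

# Cost of running one instance around the clock at a given hourly price.
daily_cost() {
  awk -v price="$1" 'BEGIN { printf "%.2f\n", price * 24 }'
}

# Example usage (the regions are an arbitrary selection):
#   spot_prices g4dn.xlarge us-east-1 eu-west-1 eu-west-2
#   daily_cost 0.16
```

At the $0.16-0.20/hour spot prices mentioned above, `daily_cost` works out to $3.84-4.80 per day.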

Looking at the instance types, there are a few others besides the mentioned g4dn.xlarge to choose from, but I’m going to stick with that for the time being:

- larger g4dn instances aren’t really worth it, since the GPU does the heavy lifting, and it’s the same single T4 until going up to 12xlarge, which comes with 4 GPUs but is more than 4x as expensive, so the extra would be rather wasted.

- Compute optimised p3 instances also don’t seem particularly worth it, as the performance gain of their NVIDIA V100 over the T4 is a much smaller multiplier than the price difference (based on a quick search for benchmarks: performance is roughly x2, while the price of the smallest machine, the 2xlarge, is x5-6).
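The comparison in the list above boils down to performance per dollar relative to the g4dn.xlarge baseline. A small sketch of that arithmetic, using only the rough multipliers quoted above (the function name is made up for illustration):

```shell
# Relative throughput per dollar of a candidate versus a baseline of 1.
# Arguments: performance multiplier, price multiplier.
value_ratio() {
  awk -v perf="$1" -v price="$2" 'BEGIN { printf "%.2f\n", perf / price }'
}

value_ratio 2 5   # p3.2xlarge at the lower price estimate: 0.40
value_ratio 2 6   # p3.2xlarge at the upper price estimate: 0.33
```

Anything below 1.00 means less folding per dollar than the baseline, so by these estimates the p3.2xlarge delivers roughly a third to two fifths of the value.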

Software setup

I’ve spun up an instance simply enough, and with a bit of trial & error got the setup sorted.

Using an Ubuntu system, the required fahclient installed just fine as per the documentation, but the GPU side needed some extra poking. Things were unblocked by installing the NVIDIA drivers and OpenCL packages (thanks to the F@H forums), in my case:

sudo apt install -qy nvidia-headless-435 ocl-icd-opencl-dev

The next step was adding a good Folding@Home config, again with a bit of trial and error. The docs say lots of the pieces can be left to self-configure (the folding slots in particular), but I’ve found that explicitly setting them works better overall. Thus my /etc/fahclient/config.xml file looks something like this:

<config>

<!-- Client Control -->

<fold-anon v='true'/>

<!-- Folding Slot Configuration -->

<gpu v='true'/>

<!-- Slot Control -->

<power v='full'/>

<!-- User Information -->

<passkey v='111111111111111111111'/>

<team v='xxxxx'/>

<user v='YYYYYYYYYYYY'/>

<!-- Folding Slots -->

<slot id='0' type='CPU'/>

<slot id='1' type='GPU'/>

<allow>127.0.0.1 A.B.C.D</allow>

<web-allow>127.0.0.1 A.B.C.D</web-allow>

<!-- Remote Command Server -->

<password v='zzzzzzzzz'/>

</config>

Here I omitted my user name and passkey (naturally), so others can fill in their own. I’ve also joined the ArchLinux team (number 45032 ;), but to each their own. The last part in the allow/web-allow section is my VPN’s IP address, which I’ve added so I can connect to the server remotely without opening it up to the rest of the world. That part (A.B.C.D) can be removed, and one could instead use, for example, SSH port forwarding to connect to the server (forwarding the required port 7396). Finally, the password section allows the remote FAHControl graphical interface to connect to the folding service remotely (without port forwarding).
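For the SSH port forwarding alternative, something like the following should do. It is a sketch under a few assumptions: the `ubuntu` login user of a stock Ubuntu AMI, a key already loaded for ssh, and a placeholder address in the usage comment. The function name is made up.

```shell
# Forward a local port to the same port on the folding instance.
# Arguments: host, port (default 7396, the web client), login user (default ubuntu).
# SSH can be overridden (e.g. SSH=echo) to inspect the command without connecting.
fah_tunnel() {
  local host="$1" port="${2:-7396}" user="${3:-ubuntu}"
  # -N: run no remote command, -L: forward local port to localhost:port on the server
  ${SSH:-ssh} -N -L "${port}:localhost:${port}" "${user}@${host}"
}

# Example usage (203.0.113.10 is a placeholder address):
#   fah_tunnel 203.0.113.10
# then open http://localhost:7396 in a local browser.
```

Since the forwarded connection arrives at the server from localhost, the 127.0.0.1 entry in allow/web-allow should be enough in this case.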

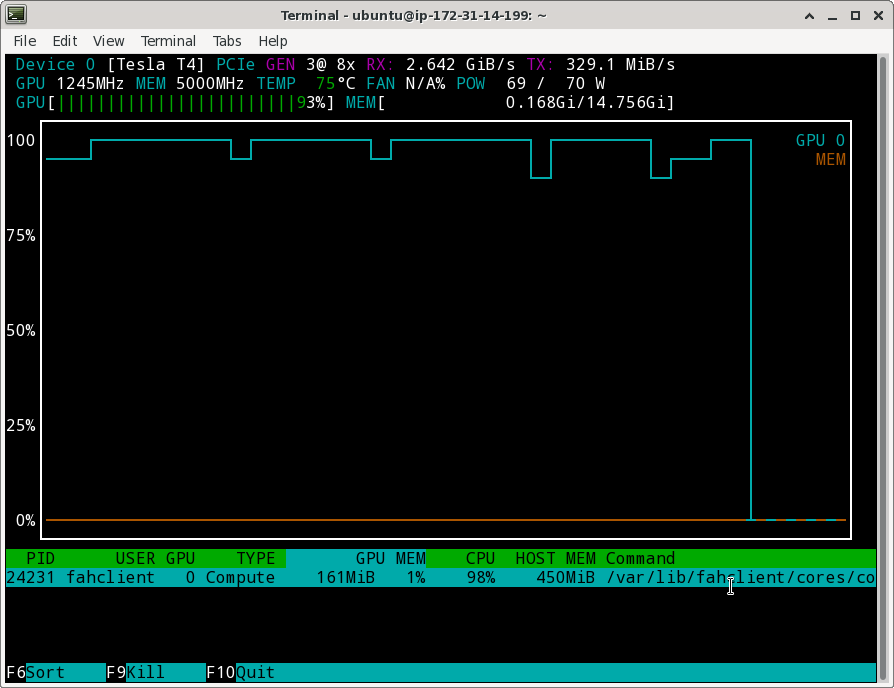

This setup then got to fold. To ensure that things were running fine on the GPU, I’ve also built nvtop on the machine and checked that the unit is maxed out.

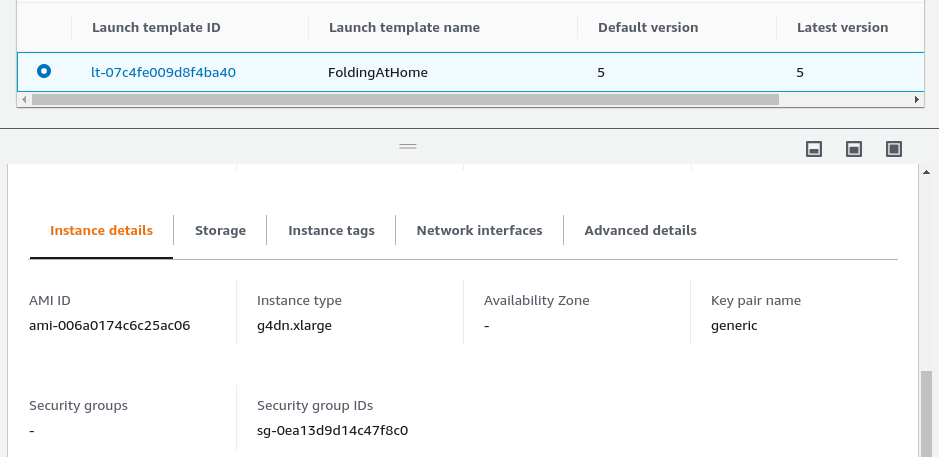
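If building nvtop feels like too much, the same check can be scripted with nvidia-smi, which ships with the driver. A minimal sketch; the 90% threshold and the function name are arbitrary choices of mine:

```shell
# Succeeds if any GPU reports utilisation at or above the threshold (percent).
# Reads "utilisation, temperature" CSV rows on stdin; threshold defaults to 90.
gpu_busy() {
  awk -F', *' -v min="${1:-90}" '$1 + 0 >= min { busy = 1 } END { exit !busy }'
}

# Example usage on the instance:
#   nvidia-smi --query-gpu=utilization.gpu,temperature.gpu \
#     --format=csv,noheader,nounits | gpu_busy 90 && echo "GPU is busy"
```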

Launch Template

So far it’s fine, but let’s make things more automatic. Spot instances can be killed, or I might want to spin up some extra instances, and I would rather have as little manual work to do as possible. What I converged on is a Launch Template which sets up everything needed, so I can start a new folding instance with a couple of clicks. In there I’ve set:

- the instance type, g4dn.xlarge

- an Ubuntu 18.04 system

- the security group, which allows all traffic from my VPN (otherwise port 22 for ssh would be enough with the ssh tunneling mentioned above)

- that these are spot requests

- my default AWS keypair for ssh access

- some tags for housekeeping (definitely optional)

- user data that does the whole setup on system start

Of the parts above, naturally the user data took the longest to figure out, because of some peculiarities of the setup.

First, FAHClient keeps wanting to interactively set things up when it is installed, so I had to get around that. If I pre-create the correct config.xml file before the install, fortunately only a single question remains (whether it should start the service automatically), and that one is taken care of by a bit of expect scripting.

#!/bin/bash

export DEBIAN_FRONTEND=noninteractive

sudo apt update

sudo apt install -qy nvidia-headless-435 ocl-icd-opencl-dev expect

wget https://download.foldingathome.org/releases/public/release/fahclient/debian-stable-64bit/v7.6/fahclient_7.6.9_amd64.deb

sudo mkdir /etc/fahclient/ || true

sudo chmod 777 /etc/fahclient

cat <<EOF > "/etc/fahclient/config.xml"

<config>

<!-- Client Control -->

<fold-anon v='true'/>

<!-- Folding Slot Configuration -->

<gpu v='true'/>

<!-- Slot Control -->

<power v='full'/>

<!-- User Information -->

<passkey v='9c6306b5e237ab269c6306b5e237ab26'/>

<team v='45032'/>

<user v='Gergely_Imreh'/>

<!-- Folding Slots -->

<slot id='0' type='CPU'/>

<slot id='1' type='GPU'/>

<allow>127.0.0.1 62.212.77.217</allow>

<web-allow>127.0.0.1 62.212.77.217</web-allow>

<password v='guardian'/>

</config>

EOF

# This new FAHClient version might not get GPUs.txt properly, load it

curl https://apps.foldingathome.org/GPUs.txt --create-dirs -o /var/lib/fahclient/GPUs.txt

sudo chmod -R 755 /var/lib/fahclient

cat <<EOF > "/home/ubuntu/install.sh"

#!/usr/bin/expect

spawn dpkg -i --force-confdef --force-depends fahclient_7.6.9_amd64.deb

expect "Should FAHClient be automatically started?"

send "\r"

# done

expect eof

EOF

chmod +x /home/ubuntu/install.sh

sudo /home/ubuntu/install.sh

With this script passed to the instance as user data, it all falls into place, and I can spin up new folding instances any time.
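One possible refinement is to keep the user name, passkey and password out of the script body by rendering the config from variables. This is only a sketch of that idea, not what the template above does. The function name and argument order are made up, and the elements are the same ones as in the config shown earlier.

```shell
# Print a FAHClient config.xml built from the given values.
# Arguments: user, team, passkey, remote password, extra allowed IP (optional).
# Values are inserted verbatim, so they must not contain single quotes.
render_fah_config() {
  local user="$1" team="$2" passkey="$3" password="$4" extra_ip="${5:-}"
  local allow="127.0.0.1${extra_ip:+ $extra_ip}"
  cat <<EOF
<config>
  <fold-anon v='true'/>
  <gpu v='true'/>
  <power v='full'/>
  <passkey v='${passkey}'/>
  <team v='${team}'/>
  <user v='${user}'/>
  <slot id='0' type='CPU'/>
  <slot id='1' type='GPU'/>
  <allow>${allow}</allow>
  <web-allow>${allow}</web-allow>
  <password v='${password}'/>
</config>
EOF
}

# Example usage (the variable names are placeholders):
#   render_fah_config "$FAH_USER" 45032 "$FAH_PASSKEY" "$FAH_PASSWORD" > /etc/fahclient/config.xml
```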

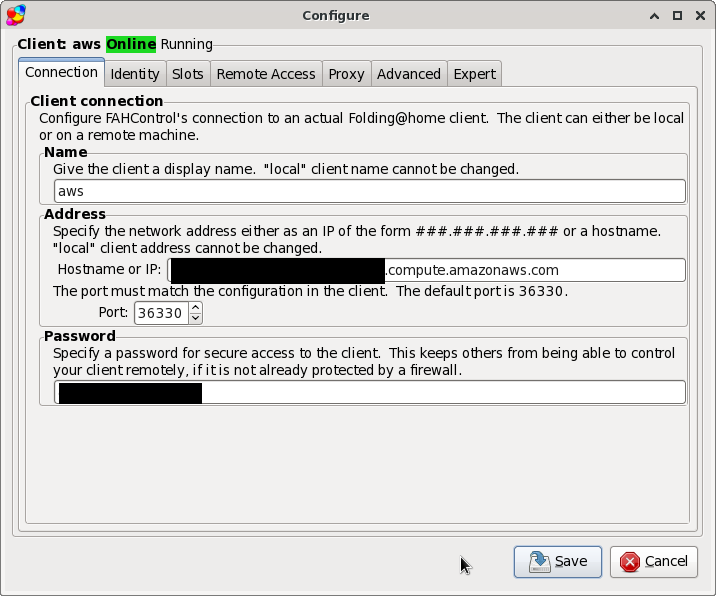

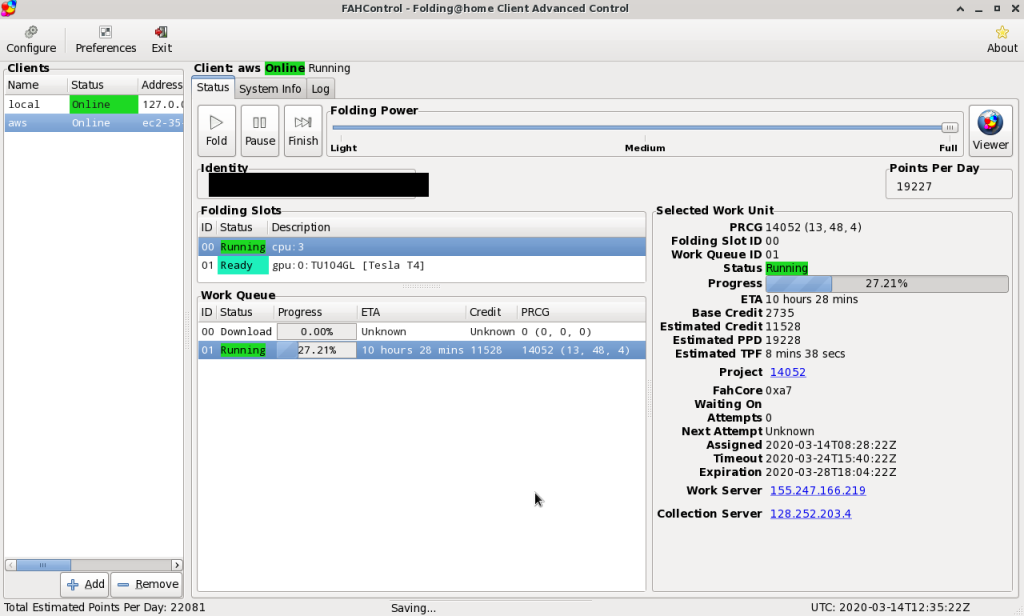
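The couple of clicks can be replaced by a single command as well. A sketch, assuming a configured AWS CLI; the template name in the usage comment is hypothetical, so substitute whatever the launch template is actually called.

```shell
# Start instances from the latest version of a launch template.
# Arguments: template name, number of instances (default 1).
# AWS can be overridden (e.g. AWS=echo) to inspect the command without credentials.
launch_folders() {
  local template="$1" count="${2:-1}"
  ${AWS:-aws} ec2 run-instances \
    --launch-template "LaunchTemplateName=${template},Version=\$Latest" \
    --count "$count"
}

# Example usage ("folding-at-home" is a hypothetical template name):
#   launch_folders folding-at-home 2
```

Since the spot request, instance type and user data all live in the template, nothing else needs to be passed here.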

Then there are two ways to connect to the server and monitor it remotely:

- the web client, on port 7396, with an interface like at the top of this post, or

- using the FAHControl desktop client, which can monitor and control multiple folding instances, and which I feel has better control over & more information about what’s being done. This is by default on port 36330, and to work remotely, a “password” has to be set in the configuration.

Using these settings, the remote workload (both the CPU and the GPU) pops up, and it’s possible to monitor & control it.

And this should be done for now…

Notes & Future

Thus far I’ve learned:

- A bit about spot instances. There are a lot more options which I haven’t touched and which might be useful in general, such as targets & instance pools, using the time-limited spot instances, etc. (but those are more for the general case, not this particular one).

- A lot about launch templates. They seem handy, though one request would be to be able to edit their description, or to have the description pre-filled when starting from a previous version (which it currently is not, unlike all the other settings).

- Some apt/dpkg coercion tricks for non-interactive setup, though there seems to be more to know. How nice it is on ArchLinux that non-interactive mode is basically a single --noconfirm flag away in pacman.

- How to use user data, though that’s definitely just scratching the surface. What would be much better is to learn cloud-init instead, which seems much more like the proper way to supply files to install and scripts to run on these virtual machines.

I’ve also experienced that Folding@Home might be struggling a bit with the current load: earlier today the work servers had trouble (though now they seem to be okay), and so did the statistics servers, so I’m guessing the whole infrastructure is under load. I wonder how they are set up, and where their bottlenecks are…

But now this is done, the ball is in the court of the researchers: keep that computational biochemistry research coming. In the meantime keep safe, everyone. Wash hands, don’t touch faces, and take good care of people around you.

Edit 2020/03/14: Looking at their server stats and connecting up the dots with their project stats, they might have run out of relevant work items for the time being. That’s kinda both good (likely large response to their shout out) and a bummer (resources just idling).

Edit 2020/04/19: Updated the template user data script to install the newer 7.6.9 version of FAHClient (instead of 7.4.4), which also needs manually loading the GPUs.txt file, because it doesn’t seem to do that by itself…

10 replies on “Folding@Home on AWS to kick the arse of coronavirus”

Folding@Home has been hammered into massive overload on not having work to go around for a couple of WEEKS now.

It’s nice to want to be able to help, but it’s a waste right now – they’re trying to get their infrastructure AND THE AMOUNT OF WORK AVAILABLE built up to handle the BALLPARK 100 TIMES AS MANY FOLDERS as they had before the COVID assist request, but they just can’t generate work units to keep the massive response BUSY.

Yeah, definitely agree that there are more people helping than there is work to be done. I stopped running my setup quite a while ago, but it feels like a good learning experience, and it’s easy to spin things up again when there’s more to do in the future. It is good to keep an eye on their stats at https://apps.foldingathome.org/serverstats, where it shows up a bit when the relevant servers are just not functioning well. It’s not super straightforward, though: sometimes things look good, but don’t work in practice. I guess this should stabilise over time.

[…] for this code go to Gergely Imreh who wrote an article about setting up Folding@Home on […]

Consider using the newer, non-beta package for this:

debian-stable-64bit/v7.5/fahclient_7.5.1_amd64.deb

Set fold-anon to false to ensure you get credit.

Good point, thanks, will give that a try!

Bumped version to the latest 7.6.9, though that needed some workaround (as it doesn’t seem to get GPUs.txt by itself on startup, and that just blocks any proper running and GPU tasks). I’m all for updating software versions, the bugs can be quite annoying, though.

I found it a lot easier to simply create an AMI of a configured machine than to hack around the FAH installer, just make sure to snapshot it when it has no work units. Also, I created a cron job that uses `nvidia-smi` to see if FahCore_22 is running and restarts FAHClient if it isn’t. The client is a lot more successful at getting new WUs on startup.

That works as well. I found this nicely low-touch for my use cases, but yours is definitely more reproducible.

I’m guessing the cron-job is more successful due to the exponential back-off of the client when it cannot get a job. After a few tries, that time becomes quite long, and restarting short-circuits that. I guess that would need some other debugging of the cron parts whether things run correctly, so there’s a bit of trade-off.

Thanks for the insights in your ways of doing things, though!
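For anyone wanting to try the cron approach from this thread, a rough sketch of such a watchdog could look like the following. It is not the commenter’s actual script. The restart command assumes the init script that the FAHClient package installs is named FAHClient, so check that on the instance first, and the function name is made up.

```shell
# Restart FAHClient when no FahCore_22 process is using the GPU.
# Reads GPU process names (one per line) on stdin.
# RESTART can be overridden (e.g. RESTART='echo restarted') for a dry run.
restart_if_idle() {
  if grep -q 'FahCore_22'; then
    echo "FahCore_22 is running"
  else
    # Assumed service name, see the note above
    ${RESTART:-service FAHClient restart}
  fi
}

# Example usage, e.g. from a script called by cron every 10 minutes:
#   nvidia-smi --query-compute-apps=process_name --format=csv,noheader | restart_if_idle
```

As mentioned above, a restart short-circuits the client’s back-off, so running this too often might just hammer the work servers. A fairly long interval seems the safer choice.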

Thanks for the write-up. I’ve been playing with a spot p3.2xlarge on Ubuntu 20.04 (ami-0e84e211558a022c0). I’m installing only fahclient_7.4.4_amd64.deb since I have FAHControl running on my local machine to see how it’s doing. nvidia-headless-435 was already installed on my image, but after updating the config.xml, restarting FAHClient, and being patient (~10 minutes) the GPU started cranking. About 4.5M PPD!

It sounds like great news for science! Thanks for dropping a note here! :D