Recently I’ve been experimenting with the Scratch programming language, created by the Lifelong Kindergarten Group at MIT. It’s a fun environment that uses visual programming: you drag and drop code blocks to control objects on a stage, as well as the stage itself. With its internal messaging system, multitasking, and event-driven approach, it has quite a bit more depth than the expression “visual programming language” implies. While it was originally aimed at creating interactive graphics and animations (see this TEDx talk by Prof. Mitch Resnick on the background), it is now evolving into new territories with the Scratch Experimental Extensions.

The Experimental Extensions define a smallish API, which can be used to create custom programming blocks for Scratch. All it needs is a JavaScript file (currently) hosted on GitHub with a few pre-defined code blocks to unlock any sort of functionality. This makes it easy to tap into any kind of web API, or to interface with any hardware that can be addressed somehow through JavaScript. There are a large number of examples already, and I wanted to see how easy or hard it is to create such an extension for a robot that happened to be around here at VIA.

The Robot

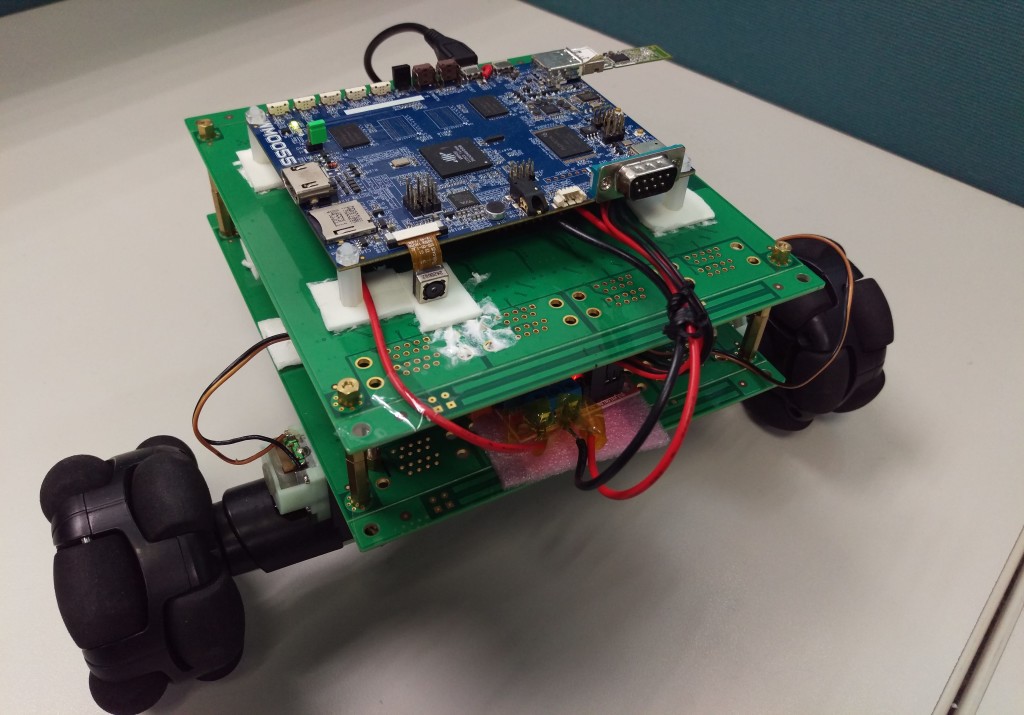

Some of the engineers at VIA have built a small robot using bits and pieces found in the lab: a dual-core ARM-based engineering sample control board, a smart battery from another project, some wheels from a commercial robot (Rovio’s 3-wheel system from a few years back), and, I think, some office supplies.

The robot’s operating system creates an access point (with the Wi-Fi USB stick on the upper right of the picture), and hosts a webpage that can be used to control the movements (back/forth/left/right), as well as to stream video (or more precisely Motion JPEG) from the camera in the front (lower left, the little black square on top of the white sticky pad). Besides the control board, there are some voltage-converter and battery-control circuits, off-the-shelf pieces by the look of them, with minor modifications (such as an LED to show when the battery is out of power).

It is as hacky as it gets, but it is all that is needed to have some fun.

Controlling Through Scratch

While the built-in webpage to control the robot is very handy, and can be used from any device that has a browser (computer, smartphone, I guess I could even control it from a Kindle), it has its limitations: I need to click on buttons to get things done, and nothing can really be automated. Still, the underlying API is a good start for doing more interesting things.

The API is very hacky too, and that’s just fine. Looking at the built-in webpage, I could see that there are a handful of GET requests to a specific URL to move_forward, turn_left, or stop. That’s straightforward enough, and it does not take more than a couple of minutes to cook up a proof-of-concept Python script using requests to try these endpoints.

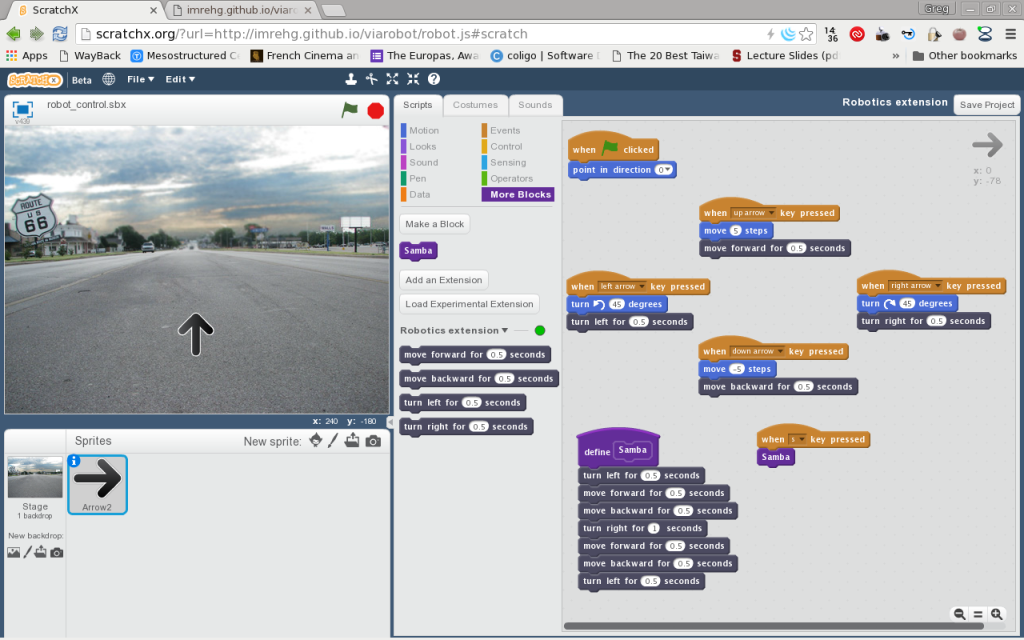
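A minimal sketch of what such a proof of concept might look like is below. It is not the script from this project: the robot address and the URL layout are made-up placeholders, only the three endpoint names come from the text above, and it uses the standard library's urllib instead of requests so that it runs without extra packages.

```python
import urllib.request

# Hypothetical address: the robot's real address and URL layout are not given here.
ROBOT_BASE_URL = "http://192.168.1.1"
# Endpoint names mentioned in the text; the real API may have more.
COMMANDS = ("move_forward", "turn_left", "stop")


def command_url(command, base_url=ROBOT_BASE_URL):
    """Build the GET URL for one movement command."""
    if command not in COMMANDS:
        raise ValueError(f"unknown command: {command}")
    return f"{base_url.rstrip('/')}/{command}"


def send_command(command, base_url=ROBOT_BASE_URL, timeout=2.0):
    """Send the command as a plain GET request and return the HTTP status code."""
    url = command_url(command, base_url)
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return response.status
```

With the computer connected to the robot's access point, calling send_command("move_forward") followed a moment later by send_command("stop") would be enough to check that the endpoints respond.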

Once that worked, I followed the Scratch Extension documentation and some of the examples linked from the site to create a JavaScript module that implements these movement commands.

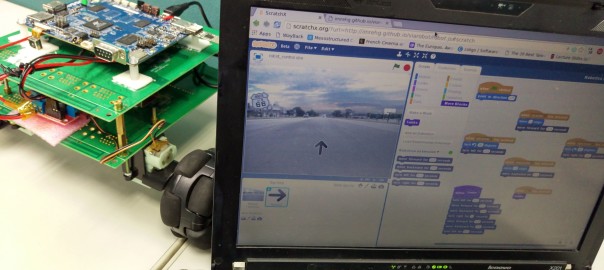

It didn’t take too many iterations to add blocks like “move forward for X seconds”, and similar ones for the other movements. I’ve created a basic project to show how these work, where the robot moves following the directional arrows: forward/backward, and turning with left/right. I figured out that a turning value of 0.5 is about 45°, so the arrow on the screen can sort of follow the direction of the robot in the physical world. For good measure I’ve also added a complex block of “samba” steps (I’m sorry, I haven’t been doing samba for years, but that’s what it reminds me of), which is triggered by pressing “s” on the keyboard. Here’s a demo of the robot controlled through Scratch:

The source code is, naturally, available on GitHub, both the module and an example Scratch project.
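The timed blocks and the rough turning calibration mentioned above can be sketched in a few lines. This is only an illustration, not the code of the actual extension (which is JavaScript): it assumes that the 0.5 figure is in seconds and that the turn rate is roughly constant, and the function names are invented for the example.

```python
import time

# Rough calibration from the text: about 0.5 (assumed to be seconds) per 45 degrees.
SECONDS_PER_45_DEGREES = 0.5


def turn_duration(degrees):
    """Estimate how long to turn for a given angle, assuming a constant turn rate."""
    return abs(degrees) / 45.0 * SECONDS_PER_45_DEGREES


def timed_move(start, stop, seconds, sleep=time.sleep):
    """Call start(), wait for the given time, then call stop().

    stop() runs even if the wait is interrupted, so the robot is not left moving.
    """
    start()
    try:
        sleep(seconds)
    finally:
        stop()
```

Here start and stop are any callables that send the matching commands to the robot, so a 90° left turn becomes timed_move(turn_left, stop_robot, turn_duration(90)), with turn_left and stop_robot supplied by the caller.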

Lessons Learned

Provided that you have a bit of JavaScript knowledge, it’s super easy to create new Scratch extensions. The platform is very well thought out and flexible. That code can be so easily included from public sources is very handy. They also make jQuery available from within the scripts, which generally helps with simplifying code (especially when working with web APIs).

The experience also showed me how important the firmware is when it comes to hardware control. For example, whoever wrote the current firmware on the robot never expected the API to be called from anywhere other than its own webpage, so I’ve run into “Access-Control-Allow-Origin” issues. It doesn’t make things unworkable, but it is something that needs to be worked around. There are other robots that have extensions available, as well as an extension to control Arduino: that one is also possible only because of a specific firmware, Firmata, which provides a proper API for controlling the boards.
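One possible way around such cross-origin issues, and not necessarily the one used for this robot, is a small local proxy that forwards requests to the robot and adds the missing header to the reply. The sketch below uses only the Python standard library; the robot address and the port are placeholders.

```python
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer

ROBOT_BASE_URL = "http://192.168.1.1"  # hypothetical robot address


def cors_headers(allowed_origin="*"):
    """Header a browser must see before a cross-origin page may read the reply."""
    return {"Access-Control-Allow-Origin": allowed_origin}


class RobotProxy(BaseHTTPRequestHandler):
    def do_GET(self):
        # Forward the request path unchanged to the robot.
        try:
            with urllib.request.urlopen(ROBOT_BASE_URL + self.path, timeout=2.0) as upstream:
                status, body = upstream.status, upstream.read()
        except OSError:
            # Any failure (unreachable robot or an error reply) is reported as 502.
            status, body = 502, b"robot unreachable"
        self.send_response(status)
        for name, value in cors_headers().items():
            self.send_header(name, value)
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def make_server(port=8080):
    """Create (but do not start) the proxy; call serve_forever() on it to run."""
    return HTTPServer(("127.0.0.1", port), RobotProxy)
```

The extension would then send its requests to the proxy on localhost instead of talking to the robot directly. The cleaner fix, of course, is for the firmware itself to send the header.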

Since the JavaScript modules need to be committed to git, pushed to GitHub, and the Scratch page reloaded before any change can be tried, it helps a lot to run the module through jslint to catch at least the syntax errors (or to have an IDE that can do that for you).

Future Ideas

I got enough of a taste of Scratch to come back for more. There are a couple of ideas that I’d like to figure out in the future, some specific to this robot project and some not:

I’m not using some of the reserved functions of Scratch Extension modules, the _getStatus() function in particular, which could show whether or not the robot is connected. I should add that functionality to this robot module, though it is not yet clear to me how things should work.

It would be great to be able to bring the video stream into Scratch. I see functionality to enable the webcam on the computer, but the modules can’t control graphics yet, as far as I can tell. This would enable a lot of interesting use cases, especially if coupled with transparent overlays. I can imagine video streams from the robot as a cockpit image, or adding facial expressions to a “matchstick person” using a webcam and image processing…

Scratch is also very good with internationalization (i18n), as it has translations in dozens of languages. The current extensions cannot be translated yet, as far as I can tell, so every extension is available in a single language only. It could be interesting, and likely demanding, to add, for example, gettext-style translations to the extensions, which is one way i18n is done in lots of projects these days.

Have you used Scratch for any of your projects? Have you made an extension for it, or are you thinking about making one? I would love to hear about your experience!